What does the computing landscape look like in a decade?

In a word, bifurcated.

At the individual level there will be a range of battery-powered devices: watches, mobile phones, tablets with removable keyboards, and those without. They will be numerous and sold at a wide range of price points, allowing each one to be dedicated to an individual. A personal computer, if you will.

Of course, these devices will have to be connected at all times to a network and the proverbial cloud, which is the other half of the puzzle. If you think you’re going to be able to walk downstairs in a decade and touch the hardware your software runs on, you’re in for a rude shock.

What happened to the middle?

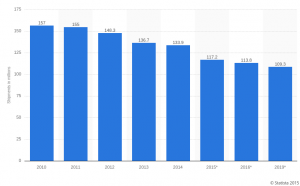

Well, Steve Jobs blew up the desktop market, and with it the outlook for PC shipments.

But, but, I hear you say. You, the reader, might have a desktop computer for gaming, or enjoy doing software development on a workstation rather than a laptop or your phone.

That’s fine, nobody said you’re wrong, but you are increasingly in the minority, and the economies of scale are not working in your favour.

What about us developers?

Yongsan Electronics Market. Korea’s strategic reserve of whitebox desktop PCs stretches to the horizon.

So we know where the hardware is going, but a16z says software is eating the world. Who’s going to write all this software? And if there are no desktop computers, how?

Maybe companies like Nitrous.io (now defunct) and Koding are right, and we’ll all be using online tools. In which case tablets with all-day battery life and WiFi are the ticket, and the market is certainly betting on that.

But I think there are serious and persistent problems with the idea of always-on connectivity, problems that cannot be fixed with money.

Broadband cellular and WiFi bandwidth has an upper limit. Sure, you can stack channels to make a single TCP flow go faster, but when everyone wants fast flows and good upload bandwidth, will that scale to cities with tens of millions of individuals? Will it scale to people who don’t want to live in said megalopolis?

Probably not.

The other outcome is that the developer PC continues to exist in an increasingly rarefied (and expensive) form, as workstations migrate to the economies of scale that drive server chipsets.

This is what video editing looks like today: part PC, mostly custom-packaged, single-use solution. Imagine if this were what was required to produce software.

How will you be programming in a decade?