I’ve been doing a lot of work with gccgo recently and with the upcoming release of Go 1.2 I’ve also been collecting benchmark results for that release.

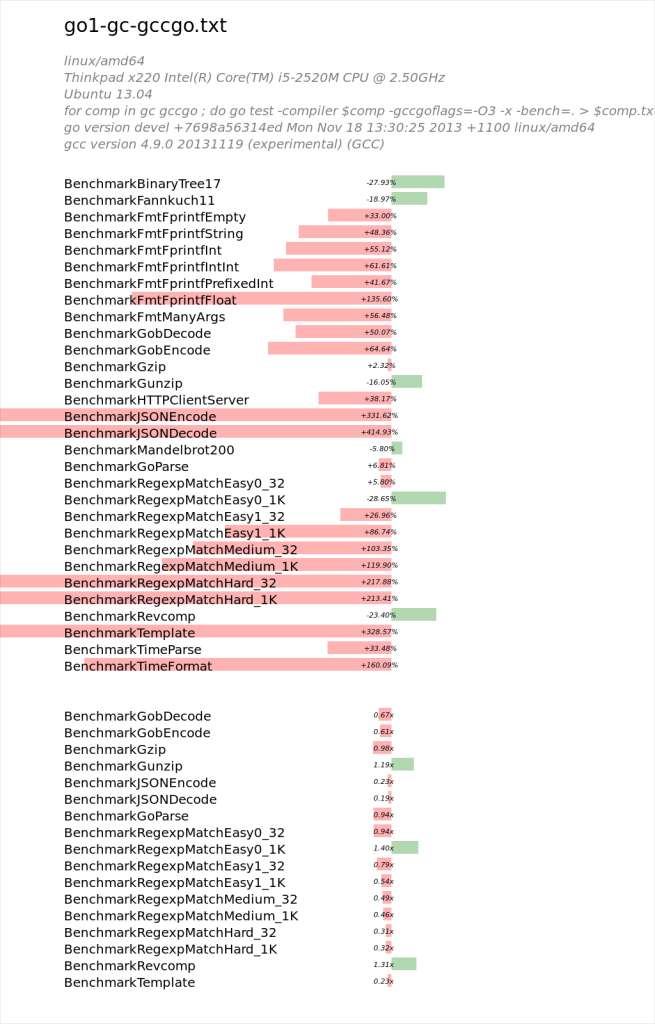

Presented below, using a very unscientific method, are the results of comparing the go1 benchmark results for the two compilers.

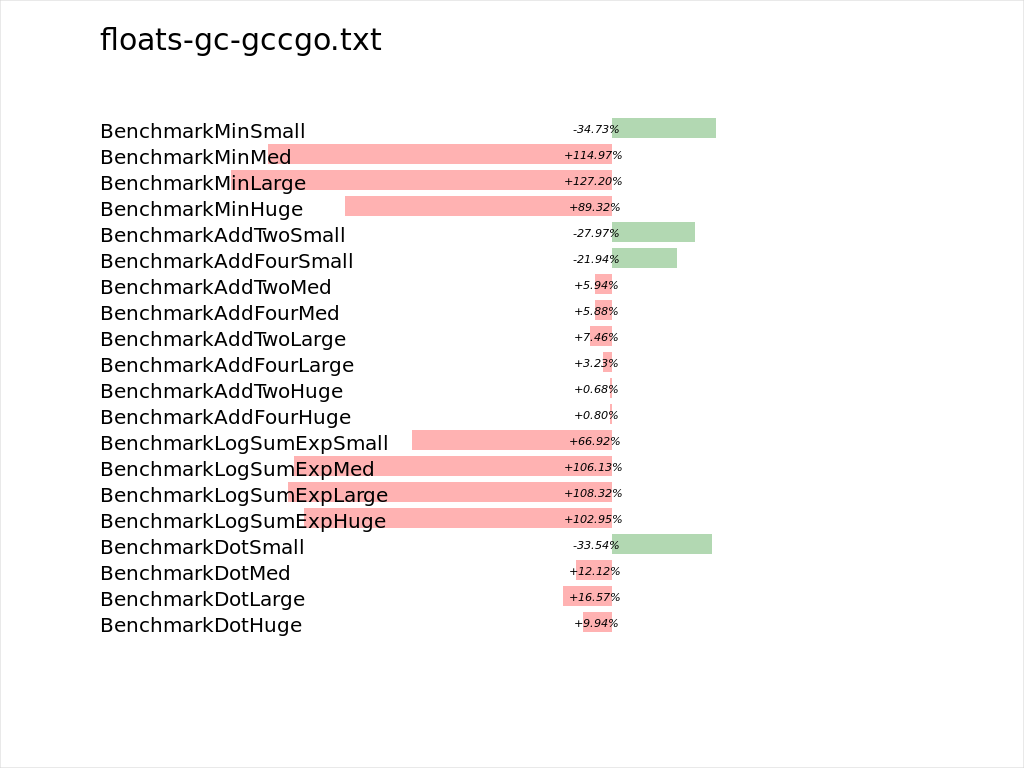

Buried among that sea of red are a few telltale signs that the more capable gcc-backed optimiser can eke out better arithmetic performance.

As another data point, here is the floats benchmark from my autobench suite, run under the same conditions as above.

I want to be clear that these are very preliminary results and, like all microbenchmarks, are subject to interpretation.

I also want to stress that I am not dismissing gccgo based on these results. As I understand it, gccgo lacks a few key features, such as escape analysis. That omission is probably responsible for most of the performance loss in benchmarks where the amount of computation is dwarfed by memory bookkeeping.

gccgo is developed largely by one person, Ian Taylor, and is a significant achievement. Recently he and Chris Manghane have been working on decoupling the gofrontend code from gcc so it can be reused with other compiler backends, LLVM being the most obvious.

If you are interested in contributing to Go, please don’t forget about gccgo, or even llgo, as possible outlets for your energies. Go itself is a stronger language because we have at least four implementations of the specification. This helps keep the compiler writers honest and avoids the language being defined by default by its most popular implementation.

> Go itself is a stronger language because we have at least four implementations of the specification

Sorry… four? gc, gccgo, llgo. What’s the fourth implementation?

-s

The fourth implementation is the SSA interpreter built by Robert Griesemer and Alan Donovan. It’s not a compiler as it does not produce executables, but it does interpret and execute Go code according to the specification.

Does red mean gccgo was faster than gc, or the other way around?

Red means that gccgo took longer than gc to execute the benchmark, or had lower throughput.

holy cow. gc is kicking some serious butt. that is awesome. did you have to pass any tuning flags to gc to get it this fast? (-O3 or equivalent?)

> did you have to pass any tuning flags to gc to get it this fast? (-O3 or equivalent?)

Nope. gc has optimisations, but they are always enabled by default. If you want to do some experiments, use -N -l to disable the optimisation, escape analysis and inlining passes.

Dave used benchviz for the illustrations. For more information, see: http://mindchunk.blogspot.com/2013/05/visualizing-go-benchmarks-with-benchviz.html